Measure model performance in both a fairness metric and a performance metric.

performance_and_fairness(x, fairness_metric = NULL, performance_metric = NULL)

Arguments

| Argument | Description |
|---|---|
| x | object of class |
| fairness_metric | fairness metric, one of metrics in fairness_objects parity_loss_metric_data (ACC, TPR, PPV, ...) Full list in |
| performance_metric | performance metric, one of |

Value

performance_and_fairness object.

It is a list containing:

paf_data - performance and fairness data.frame containing fairness and performance metric scores for each model

fairness_metric - chosen fairness metric name

performance_metric - chosen performance metric name

label - model labels
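The arguments and the returned list can be tried together in a short sketch. It reuses only calls that appear in the Examples section of this page and names both metrics explicitly instead of relying on the NULL defaults. The choice of "ACC" is just one of the fairness metrics listed above, the name `paf_acc` is made up for illustration, and the sketch assumes the fairmodels and DALEX packages are installed.

```r
library(fairmodels)

data("german")
y_numeric <- as.numeric(german$Risk) - 1

# Logistic regression on the german credit data, as in the Examples section.
lm_model <- glm(Risk ~ ., data = german, family = binomial(link = "logit"))
explainer_lm <- DALEX::explain(lm_model, data = german[, -1], y = y_numeric)

fobject <- fairness_check(explainer_lm,
  protected = german$Sex,
  privileged = "male"
)

# Name both metrics explicitly; "ACC" and "accuracy" are taken from this page.
paf_acc <- performance_and_fairness(fobject,
  fairness_metric = "ACC",
  performance_metric = "accuracy"
)

# Inspect the components described under Value.
paf_acc$fairness_metric
paf_acc$performance_metric
paf_acc$label
head(paf_acc$paf_data)
```

Passing the metric names explicitly also avoids the "is NULL, setting deafult" messages printed when the arguments are left unset.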

Details

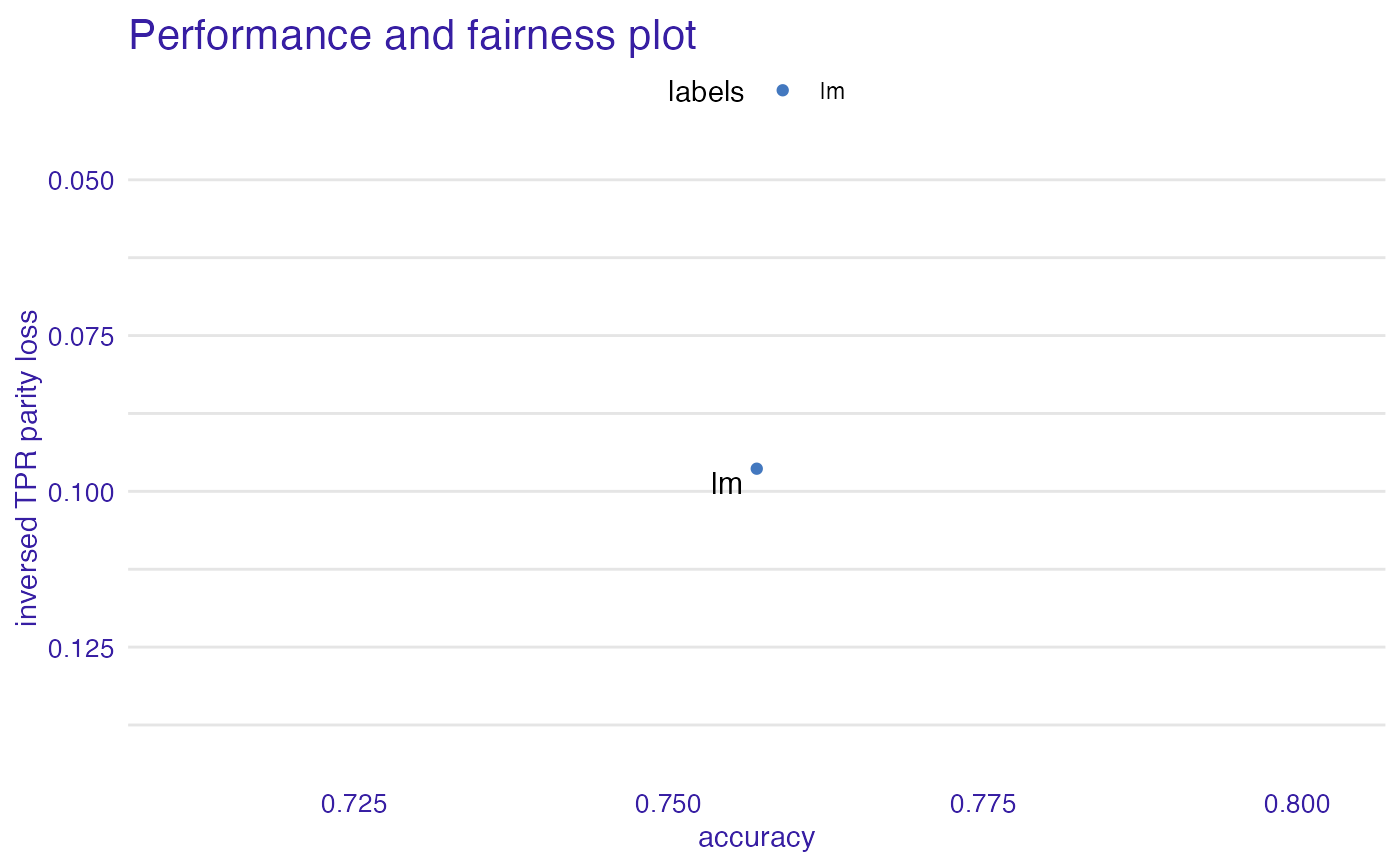

Creates a performance_and_fairness object that measures model performance and a model fairness metric at the same time, so the best model can be chosen according to both metrics. When plotted, the y axis is inverted to accentuate that models in the top right corner are the best according to both metrics.
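One way to read "best according to both metrics" is as a search for models that no other model beats on both scores at once. The base R sketch below illustrates that reading. It is not the package's own selection rule, and the data frame `scores`, its column names, and its values are hypothetical and are not output from fairmodels. It also assumes that the performance metric is on the x axis and that the fairness score is a parity loss where lower is better, which is why reversing the y axis moves the good models to the top.

```r
# Hypothetical scores for illustration only; not produced by fairmodels.
scores <- data.frame(
  label       = c("model_a", "model_b", "model_c"),
  performance = c(0.70, 0.75, 0.72), # higher is better (e.g. accuracy)
  parity_loss = c(0.10, 0.30, 0.35), # lower is better (e.g. TPR parity loss)
  stringsAsFactors = FALSE
)

# A model is dominated if some other model is at least as good on both
# scores and strictly better on at least one of them.
is_dominated <- function(i, df) {
  any(
    df$performance >= df$performance[i] &
      df$parity_loss <= df$parity_loss[i] &
      (df$performance > df$performance[i] |
        df$parity_loss < df$parity_loss[i])
  )
}

dominated <- vapply(seq_len(nrow(scores)), is_dominated, logical(1), df = scores)
best <- scores$label[!dominated]
print(best)

# Reversing ylim puts low parity loss at the top, so the models that do well
# on both scores end up towards the top right corner.
plot(scores$performance, scores$parity_loss,
  ylim = rev(range(scores$parity_loss)),
  xlab = "performance (higher is better)",
  ylab = "parity loss (lower is better, axis reversed)"
)
text(scores$performance, scores$parity_loss, scores$label, pos = 3)
```

With these made-up numbers, model_c is dropped because model_b has both higher performance and lower parity loss, while model_a and model_b remain as a trade-off between the two scores.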

Examples

library(fairmodels)

data("german")

y_numeric <- as.numeric(german$Risk) - 1

lm_model <- glm(Risk ~ .,
  data = german,
  family = binomial(link = "logit")
)

explainer_lm <- DALEX::explain(lm_model, data = german[, -1], y = y_numeric)

#> Preparation of a new explainer is initiated

#> -> model label : lm ( default )

#> -> data : 1000 rows 9 cols

#> -> target variable : 1000 values

#> -> predict function : yhat.glm will be used ( default )

#> -> predicted values : No value for predict function target column. ( default )

#> -> model_info : package stats , ver. 4.1.1 , task classification ( default )

#> -> predicted values : numerical, min = 0.1369187 , mean = 0.7 , max = 0.9832426

#> -> residual function : difference between y and yhat ( default )

#> -> residuals : numerical, min = -0.9572803 , mean = 1.940006e-17 , max = 0.8283475

#> A new explainer has been created!

fobject <- fairness_check(explainer_lm,
  protected = german$Sex,
  privileged = "male"
)

#> Creating fairness classification object

#> -> Privileged subgroup : character ( Ok )

#> -> Protected variable : factor ( Ok )

#> -> Cutoff values for explainers : 0.5 ( for all subgroups )

#> -> Fairness objects : 0 objects

#> -> Checking explainers : 1 in total ( compatible )

#> -> Metric calculation : 13/13 metrics calculated for all models

#> Fairness object created succesfully

paf <- performance_and_fairness(fobject)

#> Fairness Metric is NULL, setting deafult parity loss metric ( TPR )

#> Performace metric is NULL, setting deafult ( accuracy )

#>

#> Creating object with:

#> Fairness metric: TPR

#> Performance metric: accuracy

plot(paf)

# \donttest{

rf_model <- ranger::ranger(Risk ~ .,
  data = german,
  probability = TRUE,
  num.trees = 200
)

explainer_rf <- DALEX::explain(rf_model, data = german[, -1], y = y_numeric)

#> Preparation of a new explainer is initiated

#> -> model label : ranger ( default )

#> -> data : 1000 rows 9 cols

#> -> target variable : 1000 values

#> -> predict function : yhat.ranger will be used ( default )

#> -> predicted values : No value for predict function target column. ( default )

#> -> model_info : package ranger , ver. 0.13.1 , task classification ( default )

#> -> predicted values : numerical, min = 0.06497024 , mean = 0.6993924 , max = 0.9978889

#> -> residual function : difference between y and yhat ( default )

#> -> residuals : numerical, min = -0.7234539 , mean = 0.0006076457 , max = 0.6197718

#> A new explainer has been created!

fobject <- fairness_check(explainer_rf, fobject)

#> Creating fairness classification object

#> -> Privileged subgroup : character ( from first fairness object )

#> -> Protected variable : factor ( from first fairness object )

#> -> Cutoff values for explainers : 0.5 ( for all subgroups )

#> -> Fairness objects : 1 object ( compatible )

#> -> Checking explainers : 2 in total ( compatible )

#> -> Metric calculation : 10/13 metrics calculated for all models ( 3 NA created )

#> Fairness object created succesfully

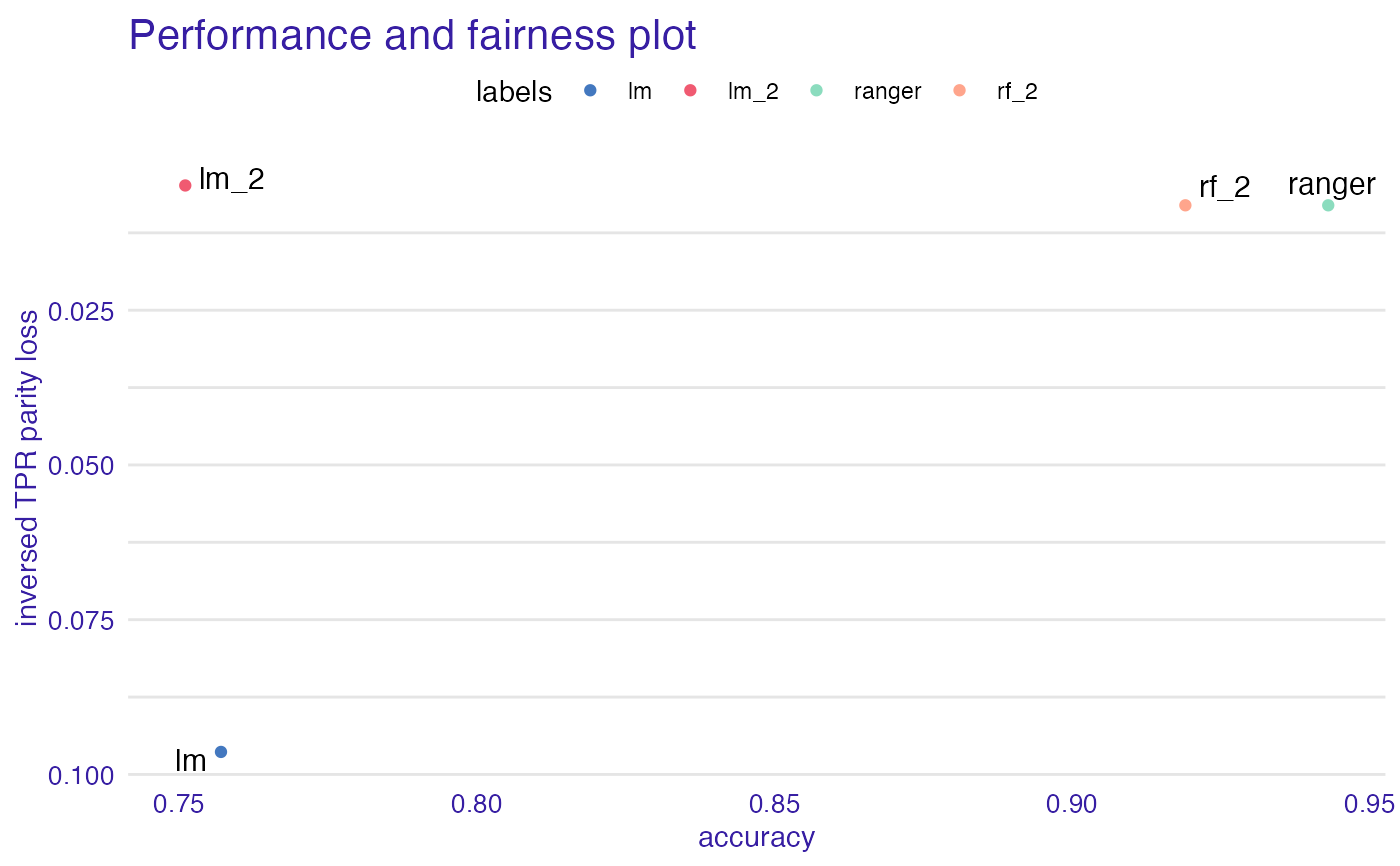

# same explainers with different cutoffs for female

fobject <- fairness_check(explainer_lm, explainer_rf, fobject,
  protected = german$Sex,
  privileged = "male",
  cutoff = list(female = 0.4),
  label = c("lm_2", "rf_2")
)

#> Creating fairness classification object

#> -> Privileged subgroup : character ( Ok )

#> -> Protected variable : factor ( Ok )

#> -> Cutoff values for explainers : female: 0.4, male: 0.5

#> -> Fairness objects : 1 object ( compatible )

#> -> Checking explainers : 4 in total ( compatible )

#> -> Metric calculation : 10/13 metrics calculated for all models ( 3 NA created )

#> Fairness object created succesfully

paf <- performance_and_fairness(fobject)

#> Fairness Metric is NULL, setting deafult parity loss metric ( TPR )

#> Performace metric is NULL, setting deafult ( accuracy )

#>

#> Creating object with:

#> Fairness metric: TPR

#> Performance metric: accuracy

plot(paf)

# }